Testing the Future of AI: What AI Sandboxes Mean for Innovation in Health Care

Artificial intelligence (AI) sandboxes and regulatory mitigation programs are in the spotlight following increased policymaker and industry interest in these tools as pathways to test rapidly evolving AI technologies for uses that would otherwise be prohibited. Emerging state initiatives and federal proposals reflect a growing consensus that sandboxes are a valuable tool for balancing structured experimentation, appropriate oversight and regulatory learning. We expect AI Sandboxes and regulatory mitigation programs to be the focus of much discussion and to proliferate at the state and federal levels in 2026.


What They Are:

AI sandboxes are structured programs that allow developers to test or deploy AI systems for a limited period in a controlled environment under regulator oversight, generating evidence to inform future policy. AI regulatory relief programs are similarly structured, time-limited programs that provide targeted relief from select state laws or regulatory requirements, under defined oversight, for the purpose of evaluating system performance, risks and appropriate regulatory treatment. Unlike sandbox testing, however, this testing occurs in the real world rather than in a controlled environment. Some state and federal legislation uses the term “Sandbox” to refer to programs that are actually regulatory relief programs. For the purposes of this article, we use the term “AI Sandboxes” for both types of programs unless a state has defined a different term.

State Action:

Three states have already acted through state law to establish an AI Sandbox or regulatory relief program:

Utah:

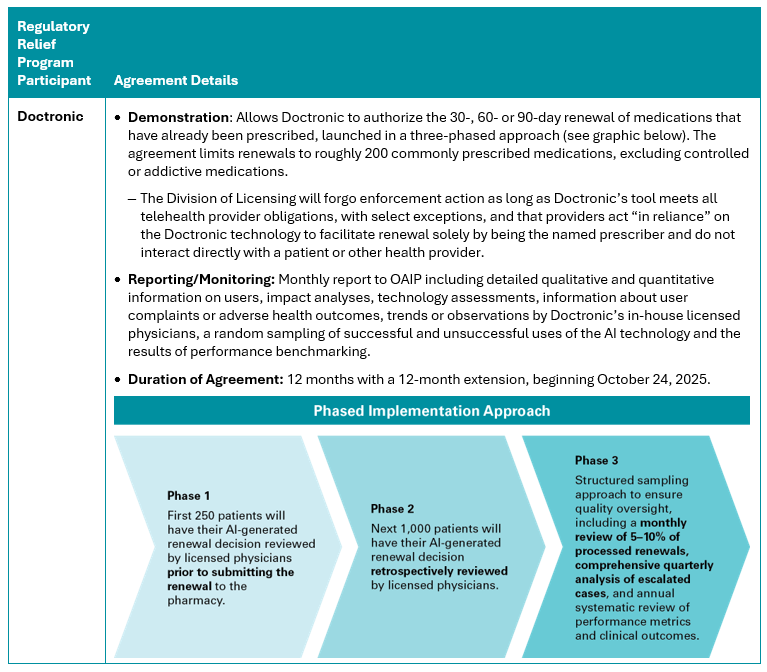

Utah created its Office of Artificial Intelligence Policy (OAIP), which has launched the Learning Lab and entered into three regulatory relief agreements related to health care, including an agreement with Doctronic (details below).

AI developers approved for regulatory relief must have an agreement with OAIP and the relevant agency whose regulations will not be enforced under the agreement.

Utah has stated that Doctronic’s focus on prescription renewals (particularly for chronic conditions and lower-risk medications) appealed to the state as an initial test case because refills are administratively burdensome for providers and improve care by increasing patients’ medication adherence. The AI tool’s focus therefore combines a low clinical risk profile with strong public health relevance. Additional areas of interest for Utah include diagnostic decision support, new prescription initiation, mental health support and specialty care pathways. The state has emphasized that the phased rollout of Doctronic’s tool aims to build trust incrementally, pointing to interim reporting, transparency and public updates.

Texas:

A Texas House bill created an AI regulatory relief program, which the bill calls a sandbox, that provides legal protection and limited market access for testing AI systems without requiring participants to obtain licenses or other regulatory authorizations. The program is intended to promote safe and innovative AI use across sectors, including health care.

Participation requires approval from the Texas Department of Information Resources and any applicable agencies. Applications must include:

- The AI system and intended use;

- A benefit assessment (including consumer, privacy, and public safety impacts);

- A risk mitigation plan; and

- Proof of compliance with applicable federal AI laws.

Delaware:

A Delaware measure directs the Delaware Artificial Intelligence Commission to work with the Secretary of State on draft legislation to establish an AI “regulatory sandbox framework for the testing of innovative and novel technologies that utilize agentic artificial intelligence.” As of January 2026, the AI Commission has established an Agentic AI Regulatory Sandbox Subcommittee, which holds regular public meetings, and Delaware’s Secretary of State has established a workgroup to investigate a potential structure for a regulatory sandbox. The Subcommittee is actively refining draft legislation to establish the program.

Looking Ahead for States:

As of the publication of this article, at least two states have already introduced or pre-filed bills for the 2026 legislative cycle to create regulatory relief sandboxes.

We may also see states reintroduce 2025 bills that did not pass, including bills in Oklahoma, Pennsylvania and Ohio (note: Ohio HB 176 is not AI-specific).

Federal Action:

The federal government is also taking notice of Sandboxes as a potential tool to balance innovation with consumer protection, through a federal initiative that recommended establishing regulatory sandboxes or AI Centers of Excellence around the country and through Senator Cruz’s (R–Texas) proposed SANDBOX Act. Under the SANDBOX Act, a proposed regulatory relief program, AI developers could request waivers from federal regulations, including, of particular importance for health care, Food and Drug Administration (FDA) approval requirements. However, the bill did not advance in 2025.

Separately, on December 5, 2025, the FDA published a regulatory notice announcing the Technology-Enabled Meaningful Patient Outcomes (TEMPO) Pilot in connection with the Center for Medicare and Medicaid Innovation (CMMI) Advancing Chronic Care with Effective, Scalable Solutions (ACCESS) Model. TEMPO and ACCESS aim to expand access to innovative digital health devices. Although the FDA stated that it generally expects devices used under the ACCESS Model to be FDA-authorized, it opened a “de facto” regulatory relief pathway for selected participants whose tools have not yet received FDA authorization. Under TEMPO, United States-based manufacturers selected for participation (up to 40 manufacturers in total, with ten per clinical use case) may offer their devices to ACCESS participants to improve patient outcomes without FDA premarket authorization, while care is covered by the model. For a detailed overview of the TEMPO Pilot, see the related Manatt on Health article.

How Stakeholders Should Get Involved:

AI Innovators:

- Consider participating in these programs, if eligible and appropriate for their technology.

- Advocate for the creation of AI Sandboxes in additional states or at the federal level, and consider advocating for reciprocity, so that approval in one state’s Sandbox carries over to other states with similar applicant requirements.

- For Sandbox participation, be prepared with strong evidence supporting the product, including how it is evaluated for safety and efficacy and how ongoing monitoring is built into robust governance processes. In addition, make a compelling case for how AI improves the quality, safety or accessibility of care, such as by serving patients in health care shortage areas or freeing up providers to deliver more complex services, or demonstrate how the product decreases cost without compromising safety or quality.

Health Systems:

In addition to the above, health systems should consider:

- If deploying or integrating an AI tool authorized as a part of a state’s AI Sandbox, ensure that providers are trained on and aware of the appropriate use of the AI tool and that patients receive required disclosures.

- Advocate for a role in evaluating clinically focused AI tools operating in an AI Sandbox, which would allow health systems to inform future iterations of AI tools as well as state and federal regulatory approaches to health care AI.

- Propose flexibilities that allow health systems and provider organizations to benefit on equal footing with health tech companies.

Policymakers:

AI Sandboxes can provide policymakers with real-world data, examples and insights to inform balanced regulation of the emerging AI industry. Policymakers should consider whether their state may benefit from establishing an AI Sandbox and, if so, consider alignment with existing AI Sandbox programs. Alignment across state AI Sandbox policies may enable policymakers to establish reciprocity across states with similar Sandbox programs and reduce fragmentation.

Policymakers also play a critical role in building public trust in and awareness of AI Sandbox programs through disclosure and reporting requirements, engagement with academic and research partners and proactive public communications. At the same time, these efforts should be paired with appropriate guardrails to ensure the Sandbox remains a safe learning environment in which companies can candidly discuss challenges and failures.

Other states have established sandbox or regulatory relief programs for financial AI innovations, but those programs are not available to health care stakeholders at this time.

The Delaware Artificial Intelligence Commission was established by in 2024.